At around noon on March 4 in Beijing, Alibaba’s Tongyi Lab convened an emergency all-hands meeting. Eddie Wu, CEO of Alibaba Group, addressed employees working on the Qwen model family.

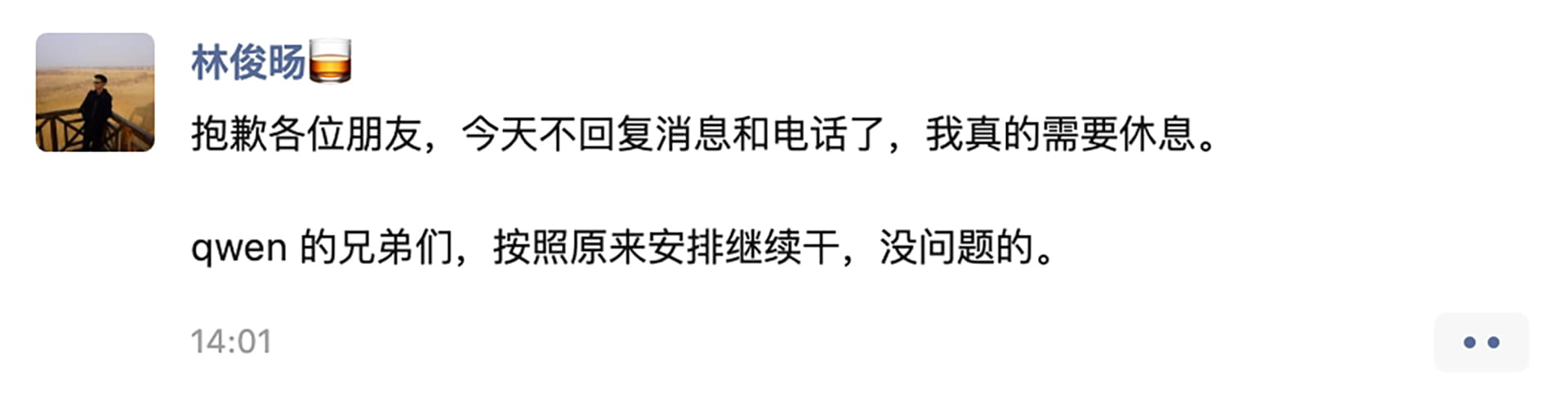

The meeting followed events that unfolded about 12 hours earlier. Lin Junyang, head of large model technology at Alibaba’s Qwen unit, abruptly announced on X that he was resigning:

me stepping down. bye my beloved qwen.

— Junyang Lin (@JustinLin610) March 3, 2026

Lin, a core architect behind Alibaba’s open-source artificial intelligence models and one of the company’s youngest senior executives, had been widely viewed as the main driver of Qwen’s technical direction. As news spread across the industry, many inside the team struggled to process the sudden departure of someone they considered its spiritual leader.

More than one Qwen team member told 36Kr that Lin’s leadership was a central reason for the group’s progress to date, particularly given that the unit operated with fewer resources than some competitors.

During the meeting, Qwen members, represented by reinforcement learning lead Liu Dayiheng, put a series of pointed questions to Alibaba’s senior management. The discussion covered team restructuring, the arrival of new member Hao Zhou from Google DeepMind, decisions about the model roadmap, and future resource allocation.

Executives from Alibaba, members of the Qwen team, and staff from other Tongyi Lab units attended the session. On issues including organizational restructuring and strategic direction, Wu, Alibaba chief people officer Jiang Fang, and Alibaba Cloud CTO Zhou Jingren responded to employees’ questions.

Management’s central message was that Qwen is not being scaled back. Executives described the restructuring as an expansion unrelated to internal political disputes, and said it would require additional resources rather than reductions.

During the meeting, Jiang acknowledged that internal communication had been insufficient. She said the adjustments are intended to bring in additional talent and provide more resources to support the team’s expansion.

Rumors had circulated that Hao Zhou would directly replace Lin and take over his team. However, 36Kr has learned that Hao Zhou’s formal role and reporting structure remain under discussion.

Executives repeatedly emphasized that the development of Qwen’s foundational model is currently Alibaba’s top priority. Competition in large models, they said, is not solely Qwen’s responsibility. Both foundational model R&D and the construction of supporting infrastructure will be coordinated at the group level.

Zhou Jingren also addressed sharper questions related to hiring quotas and computing capacity. Some employees asked why external customers, including startups, can purchase computing power through Alibaba Cloud without difficulty while internal teams face constraints in both compute and headcount.

Zhou said the team has long operated under resource limitations. The disparity between internal and external access to computing resources, he said, stems from several historical factors. He added that the company is working on a broader plan but did not provide further details.

No definitive update about Lin’s future emerged from the meeting.

Just days earlier, Alibaba had completed a new round of strategic adjustments, unifying its AI branding under Qwen and initiating another phase of organizational restructuring.

Previously, Qwen maintained separate teams for pre-training, post-training, and infrastructure. Across modalities, the group worked on language models, multimodal systems, and coding-related models.

Training single-modality models was once standard across the industry. As demand for visual understanding increased, however, vision-language models gained prominence, accelerating integration across different modalities.

According to a person familiar with the matter, Lin had been pushing since 2025 to integrate teams working on language, image, video, and code in order to improve training efficiency. The Qwen team at one point proposed merging with the Wan team, but the proposal did not succeed. Instead, the group developed its own Qwen-Image model.

Under the latest restructuring, Tongyi Lab is reportedly planning to divide Qwen along functional lines, including pre-training, post-training, visual understanding, and image generation. These units would then be merged with other teams within the lab, including those overseeing Wan and Bailing. Several employees said that limited communication about the changes contributed to internal tension.

“At least a USD 100 million talent”

On the evening of March 2, Qwen announced on X that it was open-sourcing four small Qwen 3.5 models designed for devices such as smartphones. Elon Musk liked the post and commented:

Impressive intelligence density

— Elon Musk (@elonmusk) March 2, 2026

Lin’s resignation announcement soon afterward unsettled Alibaba’s AI division.

Lin, who was born in 1993, took over leadership of the team in 2022 after the departure of Zhou Chang, the former head of Qwen technology, and directed its technical development.

In the years since, the Qwen model family expanded rapidly, evolving from the original Tongyi models to Qwen 2.5 and Qwen 3.5 Max. The lineup is widely considered competitive among open-source models.

Several former members of Alibaba’s model team previously told 36Kr that in 2023, when China’s large model wave was just beginning, major technology companies debated whether to open source their models and how aggressively to pursue the strategy. They said Alibaba’s early commitment to open source reflected the push and execution of leaders including Zhou Chang and Lin Junyang.

Alongside Lin, several members of the Qwen team also announced their resignations, including key leads responsible for different model domains. Several younger researchers reportedly submitted resignation letters on the same day.

“Qwen is nothing without its people,” one wrote, echoing a phrase widely circulated by OpenAI employees during the company’s 2023 leadership turmoil.

Qwen is nothing without its people https://t.co/9t4ysMcufP

— Chujie Zheng (@ChujieZheng) March 3, 2026

Lin’s announcement triggered responses across the global AI community. Many overseas developers expressed appreciation for his contributions to Qwen’s open-source development. “End of an era,” Yuchen Jin, founder and CTO of Hyperbolic Labs, wrote on X.

End of an era. Qwen lost its tech lead.

When we launched the Qwen 3 Next endpoints on Hyperbolic with Junyang and his team, they were still online at 6am Beijing time!

Thank you for pushing open source AI forward. Wishing you the best, @JustinLin610! https://t.co/OhldOsjQas

— Yuchen Jin (@Yuchenj_UW) March 3, 2026

“If this group really leaves, Qwen could be delayed by at least six months to a year while the team is rebuilt and retrained,” one investor told 36Kr. An AI executive at ByteDance described Lin as “at least a USD 100 million talent.” The valuation reflects personal opinion rather than a measurable market benchmark.

Rumors circulated suggesting Lin’s departure was involuntary. However, 36Kr confirmed that Lin submitted his resignation on March 3 and had not finalized details with Alibaba as of March 4, when Qwen employees first learned of the news.

According to information obtained by 36Kr, Alibaba’s senior management remains in close communication with Lin. Whether he will ultimately leave the company remains unclear.

Many employees expect Hao Zhou, formerly of Google DeepMind, to take over Qwen’s post-training work. A Qwen insider told 36Kr that he briefly joined Quark in January 2026 before transferring to Tongyi Lab. He reports directly to Zhou Jingren.

Hao Zhou earned his bachelor’s degree from the University of Science and Technology of China and a PhD from the University of Wisconsin-Madison. According to his LinkedIn profile, he spent three years at Meta and about four years at Google DeepMind. He contributed to Gemini 3.0 and worked on multi-step reinforcement learning systems involving tool use and chain-of-thought reasoning.

Alibaba won goodwill through open source, but wants more

On March 3, Lin had just unveiled several additional small open-source models on X, continuing Qwen’s longstanding open-source strategy.

Some observers characterize open source as a form of corporate charity. That interpretation does not fully capture the strategy’s underlying rationale.

For Alibaba, open sourcing serves the developer ecosystem surrounding Alibaba Cloud. By releasing models early, the Qwen family received rapid community feedback during its formative stages. That feedback helped accelerate iteration and fed improvements back into training cycles.

Qwen’s broad coverage across model sizes and modalities also allowed enterprises and universities to quickly adopt suitable versions. As those models moved into production environments, some organizations chose to purchase related services from Alibaba Cloud, indirectly contributing to the platform’s revenue.

Still, the financial value of open source remains difficult to measure, and the debate continues globally. Meta has spent billions of USD training its Llama models and released them publicly, yet the direct returns are hard to identify in its financial statements.

Despite goodwill from its open-source efforts, Alibaba’s closed-source flagship models face increasing pressure. Its 2025 releases, including Qwen 3 and Qwen 3.5, remain competitive but show signs of strain against rapidly advancing rivals.

Much of the current turbulence appears linked to rapid changes in Alibaba’s AI strategy, which some employees say diverge from the priorities of the foundational model team. This characterization reflects employee perspectives and has not been independently confirmed by Alibaba.

Pursuing frontier-level flagship models while maintaining leadership in open source requires substantial resources. Yet Alibaba’s foundational model team remains relatively small.

Since 2023, the Qwen family has open-sourced more than 400 models, spanning parameter sizes from 500 million to 235 billion. The core Qwen team consists of slightly more than 100 people. Including other Tongyi Lab teams, the total number of staff involved reaches only a few hundred.

By comparison, ByteDance’s Seed team, responsible for foundational model training, reportedly has close to 2,000 employees. Across most directions, Alibaba’s headcount is significantly smaller than that of some competitors. Multiple Qwen insiders previously told 36Kr that computing capacity and infrastructure support have long been limited, slowing model iteration.

These tensions reflect a broader moment in Alibaba’s accelerating AI strategy. The Qwen app launched in November 2025 and began a push during the Lunar New Year period, as major companies compete for consumer adoption. ByteDance’s Doubao is approaching 200 million daily active users, while Tencent has yet to fully mobilize its competing products.

At the same time, Alibaba cannot afford to fall behind in flagship model development. Those models underpin Alibaba Cloud’s commercialization strategy and may play a central role in the company’s long-term direction.

KrASIA features translated and adapted content that was originally published by 36Kr. This article was written by Deng Yongyi, with contributions from Zhou Xinyu, for 36Kr.